CTO

Sept 2022 – Present

I was brought in as CTO to give the company more structure around product and technical direction. I had already been pushing for us to think more clearly about where the company could go next, so we spent a lot of time turning broad ideas into concrete options, running workshops, and then properly prototyping the most promising ones. The first of those was a volumetric messaging prototype, for which I reshuffled resources into two teams: one building the underlying volumetric technology and the other using it to build the project itself in Unity. It was finished on time in just two fortnightly sprints, the first time a project at LIV had actually landed on schedule.

Early in the role, my other priority was making the tech team operate more effectively as the company continued to grow. To do that, I cut meeting overhead, introduced smarter hierarchies, and made the people actually leading projects accountable for them. I hired heavily, bringing in strong developers and leads where I could and promoting internally when it made sense. I also spent considerable time mentoring, reviewing, debugging, and filling gaps wherever the company still needed support.

On the technical side, I was still spread across most of the stack. I worked on volumetric capture patents and kept them alive with continuations, introduced more WebRTC into the company, and spent a lot of time improving CI/CD and infrastructure that was mostly held together by hope. That meant dealing with Kubernetes, release automation, build pipelines, versioning, code signing, telemetry, analytics, and a lot of the underlying systems work needed to make shipping more predictable.

This was also the period where I pushed hard for more Rust in the stack. There was resistance at first, but over time it became clear that it gave us a much safer foundation than the brittle C++ we had accumulated.

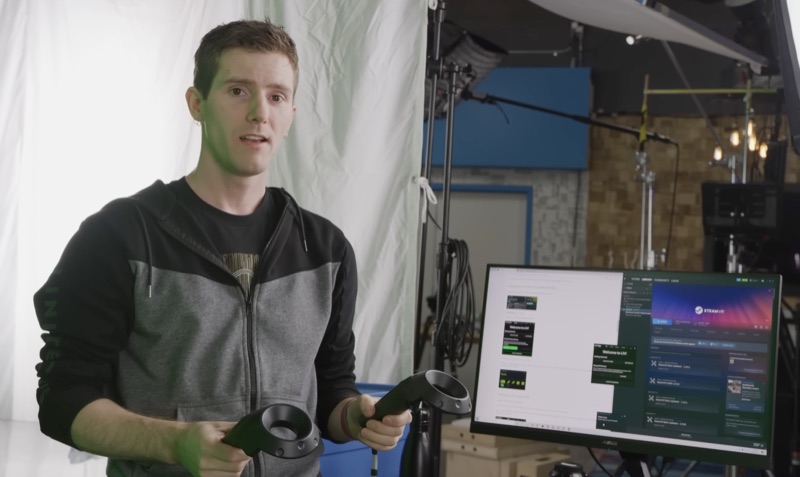

By 2024 my role had become external as well as internal. That meant handling partner relationships, delivering technical demos, and providing the technical and legal detail needed to support larger deals. The most important of those was the Meta partnership, which I helped secure by negotiating the commercial elements, working through the contract to ensure LIV retained all of its IP, scoping the technical work, and managing a purpose-built team that delivered each milestone on time. That work helped make LIV the official capture solution for Quest, while also giving us the space to keep investing in products like LCK and other internal prototypes rather than focusing solely on one deal.

LCK is a good example of what that setup enabled. It was a new product built under my direction, similar in some ways to LIV but aimed at Quest, without mixed reality capture, and with an in-game tablet UI that gave players context and controls inside the experience itself. Because I had built the team, release process, QA, and infrastructure up into a much stronger place, we were able to ship it cleanly, keep releases moving, and support external developers properly. Gorilla Tag alone peaked at roughly a million unique devices in December 2024, with around 23.8 million sessions in a single month, a clear sign that the product had landed well.

Since then, I have pushed for a more disciplined adoption of AI. Today, LIV uses it to help write code, but with the expectation that the code still has to be understandable, reviewed, and tested properly. It has also helped us get through large amounts of infrastructure and CI/CD work that we had wanted to address for a long time but had never quite found the capacity for. Beyond that, it has become a useful addition to QA and support, speeding up diagnosis and triage without pretending the model is magically correct on its own.

Product Manager

Mar – Sept 2022

We had a volumetric capture prototype internally that everyone loved, but at that stage it was still more of a convincing demo than a product. I was asked to move into product management to figure out what it could actually become: what we should capture, how we should capture it, where it should run, how it should be transmitted, and what kind of experience would make it useful rather than just technically impressive.

A big part of that was bringing in Omar Mohammed Ali Mudhir, whom I had worked with before at Framestore and trusted on the video side. Early versions of the volumetric capture demo relied on image sequences that ran to multiple gigabytes for a few seconds of content, which clearly was not going to survive contact with reality. Working together, we cut that down dramatically by encoding the sequences as video, bringing short clips down from gigabytes to tens of megabytes depending on the setup, and that changed the viability of the whole thing.

We figured that if it could run in WebXR in a browser, it could probably run anywhere, so that became a useful direction for us and gave us much broader distribution than keeping it locked to a native VR app. Omar proposed compiling our custom ffmpeg-based player to WASM so we could use the same core playback approach on the web, and that worked surprisingly well. We got a browser version running at framerate, including on mobile, but it was obvious we were right at the edge of what the platform could support at the time, and that WebGPU and mature WebCodecs support, both of which arrived later, would have made a big difference. Even though the product direction later shifted, the work was important: it fed into patent work, directly informed later LCK work, and started my long-term desire to get rid of ffmpeg as a dependency wherever possible.

Unreal Engine & LIV App

Nov 2020 – Mar 2022

I came into LIV to take over the Unreal Engine SDK, which at that point was pretty minimal and had not been meaningfully touched in a long while. I rebuilt it into something we could actually maintain, carrying over the useful rendering tricks from the original plugin but reworking the structure based on a lot of experience building plugins at Framestore. Later I brought in an implementation built on Unreal's Render Dependency Graph (RDG), along with new capture techniques that made it significantly more efficient.

Testing any of this properly was slow because it usually meant setting up a full mixed reality capture rig, so wherever I could I built commands, helper tools, and test scenes to make that less painful. I relied a lot on small purpose-built levels for checking specific problems, especially around camera movement and attachment, because certain approaches would stutter badly under the wrong conditions. I also ended up debugging right through the stack, from Unreal into the IPC layer we used for data and textures and back up into the LIV App. By the time the SDK was in a genuinely solid state, a few of the remaining problems were really engine issues rather than LIV issues, so I used old Framestore contacts at Epic to get us Enterprise support, worked with them on reproducing bugs, and pushed fixes where we could.

I also wanted the SDK to be better to use, not just technically better under the hood. I added Slate UI to catch common misconfigurations, worked on automated versioning and release flow so tagged builds could be published to S3 and surfaced through our developer portal, and helped set up editor-side polling and OAuth-based setup so developers could get going with less friction. From there the scope widened into the LIV App, Unity SDK, telemetry, analytics, and infrastructure. By the end I had worked across basically every part of the stack, from low-level graphics and capture code through to React, CI/CD, and the internal systems we used to ship and support the product.

Acute Art

At Acute Art I was the lead engineer on VR projects made with contemporary artists. The work sat somewhere between software engineering, technical art, and production, which meant constantly translating between creative intent and the practical constraints of building the thing.

I led Unreal development, worked in Houdini, mentored junior developers, and set up pipelines and CI/CD so artists and engineers could both work properly. A fair amount of the job was removing friction between those two groups before it turned into schedule problems.

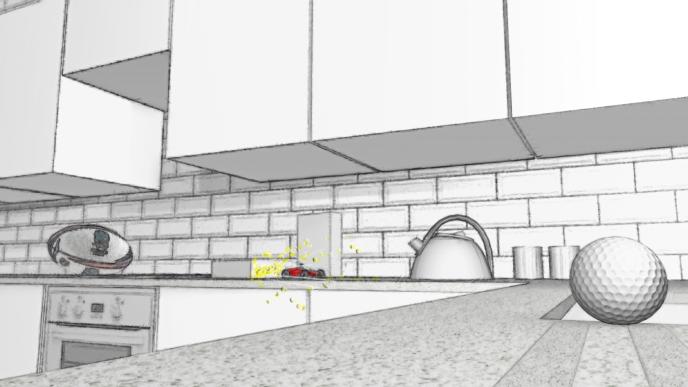

Cao Fei: The Eternal Wave

The Eternal Wave was Cao Fei's first VR work, shown as part of Blueprints at the Serpentine. It drew on her wider work around Beijing's Hongxia district and started from a reconstruction of her studio kitchen before drifting off into something much stranger. The piece had quite a specific visual language, tied partly to Nova and partly to her broader interest in memory, industry, and virtual space.

My side of it was the technical build in Unreal, with a strong art and technical-art component. We were aiming for something quite photoreal rather than obviously 'game engine', which meant a lot of work bringing complex Houdini simulations into the project, building in-engine effects, and doing the usual fiddly technical-art work needed to make everything hold together visually while still running properly in a headset.

Framestore

Framestore is where most of my realtime and VR work really got going. I spent several years building immersive projects in Unreal and Unity, covering engine work, gameplay, rendering, shaders, build systems, video, input, tools, and the usual collection of adjacent problems that come with trying to ship ambitious things on deadline.

Later I moved into a Lead Programmer role, where I split time between delivery work and internal R&D. That included research on bringing film-grade rigs into Unreal with a neural-net solver, which was exactly as fiddly as it sounds.

Dauntless Fear Simulator

Dec 2018 – Jun 2019

Dauntless was a location-based VR attraction for the Lionsgate theme park in Zhuhai, built around backpack PCs, headsets, OptiTrack body tracking, and two-player sessions in a large physical space. Halfway through the project the Montreal team's lead developer left, and I was asked to go over there, take over, and see it through delivery.

The team itself was good and the project already had a strong visual and technical foundation, but there was still work to do to get it ready for the realities of running in a theme park. Luckily we also had a dedicated testing room and a full-time QA tester running tests and producing reports every day, and that constant feedback was incredibly helpful in keeping the build solid while we closed things out.

The biggest risk was not the VR content itself but the operations and networking around it. Two players at a time had to be geared up, connected to the correct server, moved through the experience, and then handed off cleanly so the next pair could start. I built the software around that problem: a lobby plus multiple dedicated servers, a tablet app operators could use to assign and move player pairs between instances, and a separate control-room tool for monitoring sessions, troubleshooting issues, and opening voice comms to guide players if they got lost. The installation went well on site, the operators were trained on the systems, and a few months later, when the park officially opened, I heard back that the ride was running smoothly.

Volkswagen: Hyper Reality Test Drive

May – Oct 2018

This project was wild both technically and visually. It was an Unreal job, and probably one of the most striking things I worked on at Framestore: it began in a calm utopian landscape before throwing you through a wormhole into a Tron-like city of neon geometry. We used Houdini heavily for explosions, rubble, and some of the more unusual rendering tricks, including processing geometry from the CG team so we could do shader work that sold the glowing polygonal look properly.

The more difficult part was that the experience happened in a real car being driven by a stunt driver, hitting around 100 mph on the straights and climbing a steep ramp over stacked freight containers, while the virtual world had to stay locked to it. The car was tracked by a highly accurate GPS setup using a static base station, but for most of the project we did not have the car or the tracking kit on hand. What we had was an Unreal plugin full of comments in Chinese and a recorded data dump from a test run in Beijing. I had to reverse engineer what the plugin was doing, learn enough about Mercator projection to rebuild the movement inside the engine, and then build tooling on top of that so we could script events against the car's live position. We also scaled movement by ten, so every real metre of motion became ten metres in the virtual world.
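The core of that rebuild, turning GPS latitude and longitude into positions in the scene, can be sketched roughly as follows. This is a minimal illustration, not the plugin's actual code: it assumes a spherical (Web) Mercator projection, a fixed origin such as the base station, and a single cos(latitude) correction taken at that origin so small offsets come out in approximately true ground metres before the tenfold world scale is applied. The function and constant names are hypothetical.

```python
import math

# Sphere radius used by Web Mercator (the WGS 84 semi-major axis).
EARTH_RADIUS_M = 6_378_137.0
# Every real metre of motion becomes ten virtual metres.
WORLD_SCALE = 10.0


def mercator(lat_deg: float, lon_deg: float) -> tuple[float, float]:
    """Project latitude/longitude (degrees) with spherical Mercator, in projected metres."""
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    x = EARTH_RADIUS_M * lon
    y = EARTH_RADIUS_M * math.log(math.tan(math.pi / 4 + lat / 2))
    return x, y


def to_world(lat_deg: float, lon_deg: float,
             origin_lat_deg: float, origin_lon_deg: float) -> tuple[float, float]:
    """East/north offset from a fixed origin (e.g. the base station), in scaled world metres."""
    x, y = mercator(lat_deg, lon_deg)
    x0, y0 = mercator(origin_lat_deg, origin_lon_deg)
    # Mercator inflates distances by 1/cos(latitude); undo that using the origin's
    # latitude so small offsets near the track come out as roughly true ground metres.
    k = math.cos(math.radians(origin_lat_deg))
    east_m = (x - x0) * k
    north_m = (y - y0) * k
    return east_m * WORLD_SCALE, north_m * WORLD_SCALE
```

Each live fix would go through something like `to_world(fix_lat, fix_lon, base_lat, base_lon)` (hypothetical variable names) to drive the car in the scene. Unreal's default unit is the centimetre, so an in-engine version would also multiply the result by 100.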

That had a lot of knock-on effects for production. The track layout kept changing, so the world had to be built modularly and I ended up adding an editor component that let us drive the car live through the Unreal scene to check clipping and nudge assets into place. Up until the day before launch I was still in the boot of a car with Unreal open while someone drove the course. Somewhere in all of that I also slightly crashed a car in Beijing, had to use Perforce over 4G from the back of a moving vehicle, and had to evacuate the Zhuhai site because of an incoming super typhoon. In spite of all that, the project shipped on time and was completely insane in the best way.

Samsung: A Moon For All Mankind

Oct 2017 – Jul 2018

This project was genuinely incredible to work on, and I was happy to have led development on it. For Samsung we commissioned huge low-gravity simulator rigs like the ones NASA uses to train astronauts, entered into a Space Act Agreement with NASA, and got their approval on the project. The rig would lift each user to calibrate to their weight and then lower them slowly as they jumped to match the feel of lunar gravity. We took that motion data and fed it into the Gear VR experience so people could jump around and explore a virtual, but accurate, representation of the moon.
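As a rough worked example of what matching lunar gravity asks of the rig, assume it supports a constant share of the user's weight (an illustrative simplification that ignores how the rig lowers users during a jump), take Earth gravity as about 9.81 m/s² and lunar gravity as about 1.62 m/s², and consider a hypothetical 80 kg user:

$$
f = 1 - \frac{g_{\text{moon}}}{g_{\text{earth}}} \approx 1 - \frac{1.62}{9.81} \approx 0.835
$$

$$
F_{\text{rig}} = f\,m\,g_{\text{earth}} = m\,(g_{\text{earth}} - g_{\text{moon}}) \approx 80 \times (9.81 - 1.62) \approx 655\ \text{N}
$$

That leaves the user's legs carrying about $80 \times 1.62 \approx 130$ N, roughly what they would weigh on the Moon.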

From a rendering and pipeline point of view it was a difficult project. This was Unity on mobile VR, which meant getting a highly detailed lunar environment to run well on Gear VR hardware without it falling apart. The terrain was built from real lunar photography and depth data, and it took several rounds of optimisation to get it into shape. We were also starting to make heavier use of Houdini sims via vertex animation textures, so I built ways to simplify the export and import process and optimise the data enough that technical artists could iterate on the effects quickly and test them properly in engine.

The bit I was most pleased with was the rover behaviour. The experience needed to feel open enough that people could bounce around and enjoy being on the moon, but it also had to guide them toward the main narrative beats, especially the climb up to the ridge where you look out over the crater and see the Earth hanging in the sky. A simple spline-following rover looked wrong and did not solve the design problem, so I worked with rigging to get a proper setup in place and drove it with a rigid-body vehicle simulation with suspension. I spent a lot of time tuning the physics, then built behaviour that adjusted acceleration and steering dynamically based on the player's position so the rover would stay ahead, wait when needed, and still transition into scripted but physically driven moments cleanly.
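A heavily simplified sketch of that pacing idea follows. It is not the original Unity code: it assumes each body's progress is measured as distance along the intended route, uses a hypothetical proportional controller around a cruise throttle, and leaves steering and the rigid-body vehicle simulation itself out entirely. The returned value would be fed to the vehicle as its throttle input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PacingConfig:
    # All values are hypothetical tuning numbers, not the shipped ones.
    target_lead_m: float = 15.0   # preferred distance ahead of the player along the route
    cruise_throttle: float = 0.5  # throttle used when the rover is exactly at the target lead
    gain: float = 0.05            # extra throttle per metre the lead is short of the target


def rover_throttle(rover_progress_m: float, player_progress_m: float,
                   cfg: PacingConfig = PacingConfig()) -> float:
    """Return a throttle in [0, 1] from how far the rover is ahead of the player.

    Progress values are distances along the intended route (e.g. along a spline).
    """
    lead = rover_progress_m - player_progress_m
    # Player catching up -> lead below target -> more throttle.
    # Rover pulling away -> lead above target -> less throttle, reaching zero
    # (the rover waits) once lead reaches target + cruise / gain.
    throttle = cfg.cruise_throttle + cfg.gain * (cfg.target_lead_m - lead)
    return min(1.0, max(0.0, throttle))
```

With these made-up defaults the rover cruises at half throttle 15 m ahead, goes to full throttle once the player is within 5 m, and cuts throttle entirely at 25 m or more ahead. Driving a physics vehicle this way, rather than snapping it along a spline, would let the simulation's inertia and suspension smooth out the response.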

It was a fantastic project to work on. I do not miss having to wear the harness and test it every day though.

Sky: 4DVR

Mar – Aug 2017

Sky4DVR was the first project I properly led at Framestore. It was based around the Sky living room from the Idris Elba adverts, with two main VR experiences themed around Game of Thrones and Formula 1, and it forced me to get comfortable very quickly with being the person who had to make all the moving parts line up. At the start I was largely leading a team made up of technical artists, then later took on an intern developer whom I mentored and who quickly proved themselves.

A lot of the difficulty was in working with the CG department. The film and TV side would naturally hand over assets built for offline rendering, so we were constantly dealing with meshes that were far too heavy, too many UV sets, and rigs that either would not export cleanly or would perform terribly in Unreal. I spent a lot of time with artists and technical artists improving the pipeline, setting clearer expectations, and helping them adapt their work for real-time constraints.

Audio had usually been handled internally and, bluntly, never very well. On this project we worked with a sound house across the road instead, which meant giving them Perforce access, helping them get comfortable with version control, and supporting them with gameplay scripting so their work actually integrated cleanly into the build. That extra effort paid off because the final result was easily the best audio we had shipped on one of these experiences.

The installation itself had plenty of physical systems wrapped around it, and I built the control layer that drove them. The floor rumbled using a mix of live audio and synthetic wave data, giant fans kicked in as Formula 1 cars passed by, and Dyson hairdryers blasted hot air when a dragon breathed fire, with the fire itself built from flame sims exported from Houdini in multiple layers and reconstructed in Unreal. A lot of the work was in timing and orchestration, so that the environmental effects felt properly connected to the VR rather than like a set of props firing off nearby.

Fantastic Beasts VR

For the launch of Google Daydream we built a tie-in title for Fantastic Beasts and Where to Find Them. Framestore's film department was already deep into the movie, which meant we had access to film-grade assets and could build something that felt much closer to the source material than a typical mobile VR project.

The most interesting technical work was how we handled the creatures. Rather than playing back full 360 stereo video, we used 4K stereo latlong renders for the environments and layered stereo video patches of the beasts over the top, using camera metadata to reconstruct them correctly in Unity. That let us keep much higher detail where it mattered instead of wasting most of each frame on background pixels that barely changed. Making the interactive moments work cleanly took a lot of iteration, especially around animation timing and video transitions so the beasts could move from idle loops into interaction beats without obvious seams.

My main focus was gameplay programming for the shed minigames and interactive sequences, working closely with technical art to make the whole thing feel tactile rather than just cinematic. It was a good project for learning how rendering tricks, video systems, animation constraints, and game feel all have to line up if you want mobile VR to feel polished.

University of Bath

Freelance

CMS & Web Development

Before the VR work I spent about a decade doing freelance web design and development. I started at around eleven and was getting paid by thirteen, which meant a fairly traditional diet of PHP, MySQL, front-end work, and trying to keep browsers of the period from doing anything too inventive.

Most of the projects were CMS-driven sites, so the work covered both front-end and back-end development and a lot of practical client work besides. It was a useful way to learn by building real things for real people, even if parts of that era are best left in the past.